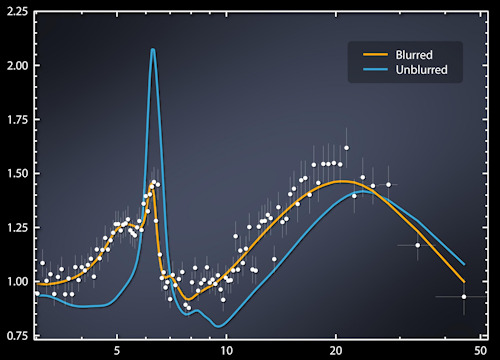

When the new H1N1 influenza strain was discovered in 2009, the usual monitoring and surveillance procedure was activated in the USA, which required all cases to be reported to the regional health and control centers.

It was a widespread, well-established procedure, but it had a limitation: the picture it gave of the virus's spread was always two weeks behind the actual situation.

In the same period, the journal Nature published an article in which some Google engineers, to general amazement and disbelief, claimed to be able to track and even predict the geographic spread of H1N1 based solely on the keywords used on the web.

Specifically, starting from the 50 million words most used on the net by US users, the Mountain View gurus had identified those most used in the areas flagged by the regional health centers and, by applying 450 million different mathematical models, were able to highlight a correlation between 45 keywords and the spread of the virus.

Events confirmed the claim, and for the first time it was shown that the spread of a virus could be predicted with purely mathematical methods, essentially using (huge) amounts of data processed by machines with adequate computing capacity.

This story is further proof of how much the digital revolution, founded on Information Technology (IT), has transformed our era. From it began what is referred to as the "fourth industrial revolution", an epochal change that is unfolding with unprecedented breadth and speed, and that touches more fields than ever before.

Artificial Intelligence (AI), robotics, biotechnology, nanotechnology, the Internet of Things (IoT), autonomous driving and quantum computing are just some of the sectors going through a period of continuous progress, extraordinary for the variety and depth of its results and for its speed.

Of the acronym IT, we often focus on the T, the technology, that is, computers: ever more powerful machines, able to double their computational capacity every 18 months according to a law, known as Moore's law[1], that, although it is an empirical observation rather than a scientific law, has been borne out by more than 50 years of experience.
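To get a feel for what doubling every 18 months implies, the growth can be worked out with a few lines of Python. This is only an arithmetic sketch: the function name `growth_factor` is invented for the illustration, and the 18-month period is simply the figure quoted above.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Return how many times a quantity multiplies if it doubles
    once every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


# Doubling period of 18 months (1.5 years), as quoted in the text.
for years in (3, 15, 50):
    factor = growth_factor(years, doubling_period_years=1.5)
    print(f"after {years:>2} years: about {factor:,.0f} times the starting capacity")
```

Under this assumption, three years bring a fourfold increase, fifteen years roughly a thousandfold one, and fifty years a factor on the order of ten billion.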

That said, and taking nothing away from the importance of increasingly powerful machines, the real wealth today lies in data, indeed in the BIG DATA derived from the billions of pieces of information produced every single instant by our clicks, tweets and purchase preferences.

In the first quarter of 2018, Facebook had 2.19 billion active users[2], a figure over 20% of the planet's population, who in turn interacted with 200 billion other individuals in the network. In the same year, YouTube had one and a half billion users, followed by WhatsApp with one billion three hundred million.

Impressive numbers, which yield an inexhaustible source of data.

The web, after all, is an environment in which millions of people spend a significant part of their daily lives (in Italy, on average 6 hours a day in 2018), exchanging opinions, emotions, pleasures, sorrows, buying preferences and so on.

It is a set of individual behaviors that can be "datafied", that is, recorded, analyzed and reorganized according to scientific criteria, continuously producing data.

Two examples, more than any others, convey how the results we seek are hidden in the information.

In 2006, the AOL (America Online) portal made public, for scholars and researchers, a database of 20 million "queries" made over three months by 657 thousand users, after anonymizing the users involved for privacy reasons. Nevertheless, within a few days, a 62-year-old widow from Georgia, Thelma Arnold, was correctly identified as user number 4417749, triggering a dispute that led to the dismissal of three AOL employees.

Likewise, when Netflix published the preferences of about half a million anonymous users, it was not long before a lady from the Midwest was identified by name and address. Researchers at the University of Texas later demonstrated that it is indeed possible to recognize a user of the service from just 6 films out of 500.
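Why a handful of films can be enough is easy to see on a toy example. The sketch below is not the method used by the Texas researchers: the `ratings` table, the user ids and the film labels are all invented, and the only point is that each extra film an observer knows about shrinks the set of anonymous records that still match.

```python
# Hypothetical "anonymized" table: anonymous id -> films that user rated.
ratings = {
    "user_001": {"A", "B", "C", "D"},
    "user_002": {"A", "B", "E", "F"},
    "user_003": {"A", "C", "E", "G"},
    "user_004": {"B", "D", "F", "G"},
}


def candidates(known_films: set) -> list:
    """Return the anonymous ids whose rated films include every film
    the observer already knows the target has seen."""
    return sorted(uid for uid, films in ratings.items() if known_films <= films)


print(candidates({"A"}))            # three records still match
print(candidates({"A", "E"}))       # two records still match
print(candidates({"A", "E", "G"}))  # a single record is left
```

With only four users, three films suffice to single one out; with millions of users and thousands of titles the same narrowing happens, because real viewing histories are highly individual.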

But it is not only the web: think of the cameras, everywhere in the streets and squares of our cities, and of the ways and purposes for which the traces we leave can be used by dedicated facial recognition software (a few years ago, an English newspaper discovered that within 200 meters of the house where George Orwell, author of the dystopian novel "1984", once lived, there were no fewer than 30 cameras).

According to Viktor Mayer-Schönberger and Kenneth Cukier in their fundamental work[3] (used as the primary source for this article), so much data was produced in 2012 that, loaded onto CD-ROMs, it would have formed five parallel stacks reaching the moon, while printed on sheets of paper it would have covered the entire territory of the USA three times.

Note that this was 6 years ago, and that in the meantime the amount of data produced each year has doubled twice more (on average, it doubles every three years).

Data are the black gold of our era: of inestimable value for their quantity and for the multiplicity of their uses, which most often differ from those for which the data were originally collected. Indeed, more and more often we provide information online that will serve purposes still unknown at the time of its collection.

They feed the new frontier of AI as its primary fuel: thanks to them, computers progress and begin to "perceive" external reality.

Through robots, computers begin to perform autonomous actions[4], decided on the basis of situational data collected and analyzed from the outside world (and not on the programming they received).
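The distinction between automated and autonomous systems drawn in note 4 can be sketched in code. This is a deliberately crude illustration: the obstacle scenario, the action names and the weights are all invented, and random sampling stands in for whatever probabilistic reasoning a real autonomous system would perform.

```python
import random


def automated_response(obstacle_distance_m: float) -> str:
    """Deterministic rule: the same input always produces the same action."""
    return "brake" if obstacle_distance_m < 5.0 else "continue"


def autonomous_response(obstacle_distance_m: float, rng: random.Random) -> str:
    """Probabilistic choice: candidate actions are weighted according to the
    observed situation and one of them is sampled (weights are hypothetical)."""
    if obstacle_distance_m < 5.0:
        actions, weights = ["brake", "steer_left", "steer_right"], [0.6, 0.2, 0.2]
    else:
        actions, weights = ["continue", "slow_down"], [0.8, 0.2]
    return rng.choices(actions, weights=weights, k=1)[0]


rng = random.Random(42)
print({automated_response(3.0) for _ in range(20)})        # always one action
print({autonomous_response(3.0, rng) for _ in range(20)})  # may contain several
```

Given the same 3-meter reading twenty times, the automated rule always answers the same way, while the sampled policy can answer differently from one call to the next.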

But how are BIG DATA used? By applying mathematical methods, "algorithms", devised according to what one wants to discover, at a given moment, about a particular phenomenon.

The algorithms, which exploit large amounts of data, allow us to spot "correlations", understood as the probability that a given relationship between the elements examined will recur.
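As a rough illustration of what searching for correlations means in the flu example, the sketch below ranks a few search terms by how closely their weekly frequency follows the number of reported cases. All the figures are invented, the Pearson coefficient is just one common way of measuring correlation, and nothing here reproduces the models actually used by Google.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical weekly figures for one region (not real data).
reported_cases = [10, 14, 22, 35, 50, 41, 27, 15]
search_counts = {
    "flu symptoms":    [120, 150, 240, 360, 510, 430, 280, 170],
    "cough remedy":    [80, 95, 130, 200, 260, 240, 150, 100],
    "football scores": [300, 310, 290, 305, 295, 300, 310, 290],
}

# Rank the terms from most to least correlated with the reported cases.
ranking = sorted(
    ((pearson(counts, reported_cases), term) for term, counts in search_counts.items()),
    reverse=True,
)
for r, term in ranking:
    print(f"{term:16s} r = {r:+.2f}")
```

Terms whose frequency rises and falls with the case counts score close to +1, while an unrelated term stays near zero; a real study would run this kind of screening over millions of terms.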

That such links may emerge by pure coincidence takes nothing away from the validity of the study itself, because imprecision and inaccuracy are statistically "smoothed out" as the amount of available data grows.

The principle of causality, with all due respect, belongs to the era of SMALL DATA, when understanding rested on careful analysis of the (limited) elements available, carried out by "experts" in the particular sector under study.

In the era of BIG DATA, understanding of phenomena is instead achieved with the help of "data scientists", a cross between programmer, mathematician and statistician, rather than traditional experts.

Indeed, the truth lies in large volumes of data: it is no coincidence that algorithms which give unsatisfactory (probabilistic) results with limited amounts of data work wonders when applied to larger numbers.

"Google Translate" provides a clear example of how the probabilistic criterion, combined with the amount of information, can be applied to solve a problem as complex as translation.

The program, in fact, does not translate by applying grammar rules or using stored dictionaries, but on the basis of the probability that the content of a given document can be translated according to the grammatical structures and the meanings of the words, verbs and adjectives found in the billions of documents, in all languages, that it holds in memory.
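The idea of choosing a translation by probability rather than by rule can be shown with a toy model. The sketch below is an assumption-laden simplification, not Google's system: it works word by word, whereas real statistical translators work on phrases and context, and the tiny list of Italian-English pairs is invented for the example.

```python
from collections import Counter, defaultdict

# Hypothetical word pairs observed in already-translated documents.
aligned_pairs = [
    ("casa", "house"), ("casa", "house"), ("casa", "home"), ("casa", "house"),
    ("gatto", "cat"), ("gatto", "cat"),
    ("rosso", "red"), ("rosso", "red"), ("rosso", "ruddy"),
]

# Count how often each source word was rendered by each target word.
counts = defaultdict(Counter)
for source_word, target_word in aligned_pairs:
    counts[source_word][target_word] += 1


def most_probable_translation(word):
    """Return (translation, estimated probability); an unseen word is
    returned unchanged with probability 0.0."""
    options = counts.get(word)
    if not options:
        return word, 0.0
    best, freq = options.most_common(1)[0]
    return best, freq / sum(options.values())


for word in "gatto rosso casa".split():
    print(word, "->", most_probable_translation(word))
```

No grammar rule or dictionary entry is consulted: "casa" becomes "house" simply because that rendering appears in three of the four observed pairs, and the estimate improves only as more translated text is counted.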

In this way, the program beat the competition from Microsoft and quickly became the most used translator in the world.

In this context, as already mentioned, computing capacity is only one part of the process, and not even the most important one, just like the algorithms used from time to time. The determining factor remains the amount of data available: the more we have, the greater our chances of finding what we are looking for.
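The weight given here to sheer quantity can be illustrated with a small simulation. It is a sketch under stated assumptions: a hypothetical phenomenon occurs with a true rate of 30%, the function name `mean_abs_error` and all parameters are invented, and the estimate is a plain observed frequency rather than any specific BIG DATA algorithm.

```python
import random


def mean_abs_error(sample_size, trials, true_rate=0.3, seed=0):
    """Average distance between the observed frequency and the true rate,
    over several independent samples of the given size."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        hits = sum(rng.random() < true_rate for _ in range(sample_size))
        total += abs(hits / sample_size - true_rate)
    return total / trials


for n in (10, 100, 1000, 10000):
    print(f"sample size {n:>5}: mean error {mean_abs_error(n, trials=50):.4f}")
```

The same naive estimator that is badly off with ten observations comes very close to the true rate with ten thousand, which is the statistical effect the article relies on.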

BIG DATA "give wings" to the fourth industrial revolution and allow a greater understanding of the world. Learning to manage them and use them to the fullest is the challenge that awaits us.

[3] Big Data, by Viktor Mayer-Schönberger and Kenneth Cukier, Garzanti, 2013

[4] A system is called "automated" when it acts primarily in a deterministic manner, always reacting in the same way to the same inputs. An "autonomous" system, on the other hand, reasons on a probabilistic basis: having received a series of inputs, it works out the best responses. Unlike an automated system, an autonomous system can produce different responses to the same input.

Photo: Emilio Labrador / NASA